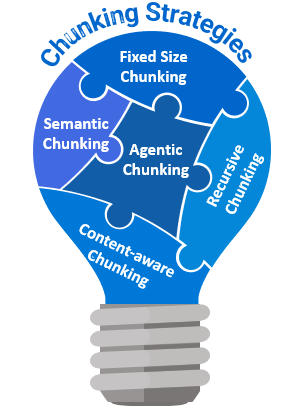

1. Fixed-Size Chunking

Fixed-size chunking divides text into segments of a predetermined size, such as 100 words or 200 characters, optionally with overlap between consecutive chunks to preserve context across boundaries. Because it is computationally cheap and simple to implement, it is widely used in natural language processing (NLP) applications, particularly for relatively uniform texts where preserving context across chunk boundaries is less critical. Its simplicity and effectiveness make it one of the most common chunking methods.
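A minimal sketch of word-based fixed-size chunking with overlap, in Python. The function name `fixed_size_chunks` and its `chunk_size`/`overlap` parameters are illustrative choices rather than any particular library's API, and splitting on whitespace stands in for a real tokenizer.

```python
def fixed_size_chunks(text: str, chunk_size: int = 100, overlap: int = 20) -> list[str]:
    """Split text into chunks of `chunk_size` words; consecutive chunks share `overlap` words."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")
    words = text.split()  # whitespace split as a stand-in for tokenization
    step = chunk_size - overlap  # how far each new chunk advances
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this chunk already reached the end of the text
    return chunks
```

With ten words, `chunk_size=4` and `overlap=1`, this yields three chunks, each beginning with the last word of the previous one.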

2. Recursive Chunking

Fixed-size chunking is straightforward to implement but ignores the inherent structure of text. Recursive chunking takes a more sophisticated approach: it divides text iteratively and hierarchically using an ordered list of separators or criteria (for example, paragraph breaks, then line breaks, then spaces). If the first split does not produce segments of the desired size, the method applies itself recursively to the oversized segments with the next separator or criterion until each segment fits the target size. The resulting chunks are roughly uniform in size, although their exact sizes may still vary. This adaptability makes recursive character chunking particularly effective for texts with diverse formats and helps preserve contextual integrity in complex texts.
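The recursive idea can be sketched as follows. This is a simplified, hypothetical `recursive_chunks` function loosely modeled on character-based recursive splitters; the separator order (blank lines, line breaks, spaces, then individual characters) and the greedy merging of small pieces back together are assumptions, not any particular library's exact behavior.

```python
def recursive_chunks(text: str, chunk_size: int = 200,
                     separators: tuple[str, ...] = ("\n\n", "\n", " ", "")) -> list[str]:
    """Recursively split text so that every chunk has at most chunk_size characters."""
    if len(text) <= chunk_size:
        return [text] if text.strip() else []
    if not separators or separators[0] == "":
        # Last resort: hard split into fixed-size character windows.
        return [text[i:i + chunk_size] for i in range(0, len(text), chunk_size)]
    sep, rest = separators[0], separators[1:]
    chunks: list[str] = []
    current = ""
    for piece in text.split(sep):
        # Greedily merge pieces while the merged text still fits.
        candidate = piece if not current else current + sep + piece
        if len(candidate) <= chunk_size:
            current = candidate
            continue
        if current:
            chunks.append(current)
            current = ""
        if len(piece) <= chunk_size:
            current = piece
        else:
            # Piece is still too long: recurse with the next, finer separator.
            chunks.extend(recursive_chunks(piece, chunk_size, rest))
    if current:
        chunks.append(current)
    return [c for c in chunks if c.strip()]
```

Sizes here are measured in characters. Every returned chunk is at most `chunk_size` long, but chunks can be noticeably shorter when a separator boundary falls early, which is the size variation the text above mentions.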

3. Content-aware Chunking (Document-Specific Chunking)

Document-specific chunking creates chunks by recognizing the inherent structure of a document, such as its paragraphs and sections. Because chunks align with the document's logical divisions, each one stays coherent and well organized. The method is particularly effective for structured formats like Markdown and HTML, where headings and tags mark those divisions explicitly, and it improves the relevance and usability of the retrieved information.
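For Markdown, a structure-aware splitter can start a new chunk at every heading and record the enclosing heading path as metadata. The sketch below is a hypothetical `markdown_sections` helper; it assumes ATX-style (`#`) headings and, for brevity, does not skip `#` lines that appear inside fenced code blocks.

```python
import re

# An ATX heading: 1-6 '#' characters, whitespace, then the heading text.
HEADING = re.compile(r"^(#{1,6})\s+(.*)$")

def markdown_sections(markdown: str) -> list[dict]:
    """Split Markdown into one chunk per heading-delimited section."""
    sections: list[dict] = []
    path: list[str] = []   # stack of enclosing headings, outermost first
    lines: list[str] = []  # body lines of the section being collected

    def flush() -> None:
        body = "\n".join(lines).strip()
        if body:
            sections.append({"headings": list(path), "text": body})
        lines.clear()

    for line in markdown.splitlines():
        match = HEADING.match(line)
        if match:
            flush()  # close the previous section before changing the path
            level = len(match.group(1))
            del path[level - 1:]  # drop headings at this level or deeper
            path.append(match.group(2).strip())
        else:
            lines.append(line)
    flush()
    return sections
```

Keeping the heading path with each chunk lets a retriever show, or embed, where in the document a passage came from.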

4. Semantic Chunking

Semantic chunking organizes text into meaningful, semantically complete sections based on the relationships between ideas. Each section, or "chunk," represents a cohesive concept, which preserves the integrity of the information for accurate retrieval and generation. Although this approach is slower and more computationally intensive than the methods above, it is well suited to natural language processing (NLP) tasks that require high semantic accuracy, such as summarization and detailed question answering. The "SemanticChunker" tool implements this idea by dynamically selecting breakpoints between sentences or paragraphs based on the similarity of their embeddings, so that each chunk contains semantically related sentences. By focusing on meaning and context, semantic chunking can significantly improve the quality of information retrieval, making it a strong choice when preserving semantic integrity is crucial.
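The breakpoint logic can be illustrated with a small sketch. To keep it self-contained, the hypothetical `embed` function below is a toy bag-of-words vector rather than a neural embedding model, and a fixed `threshold` on the cosine similarity of adjacent sentences stands in for whatever breakpoint rule a real tool such as SemanticChunker uses.

```python
import math
import re
from collections import Counter

def embed(sentence: str) -> Counter:
    """Toy bag-of-words 'embedding'; a real system would use a sentence-embedding model."""
    return Counter(re.findall(r"[a-z']+", sentence.lower()))

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def semantic_chunks(text: str, threshold: float = 0.2) -> list[str]:
    """Start a new chunk wherever adjacent sentences fall below `threshold` similarity."""
    # Naive sentence split: break after '.', '!' or '?' followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    if not sentences:
        return []
    chunks, current = [], [sentences[0]]
    for prev, sent in zip(sentences, sentences[1:]):
        if cosine(embed(prev), embed(sent)) < threshold:
            chunks.append(" ".join(current))  # topic shift: close the current chunk
            current = []
        current.append(sent)
    chunks.append(" ".join(current))
    return chunks
```

In practice you would replace `embed` with a real embedding model and tune the breakpoint rule (a fixed threshold here; a relative rule such as a percentile of adjacent distances is a common alternative) on your own data.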

5. Agentic Chunking

Agentic chunking is an advanced technique that uses large language models (LLMs) to decide the size and content of text segments based on contextual analysis. The LLM reads the text and identifies segmentation points that keep related information together, much as a human reader would when dividing a document into meaningful parts; the goal is to automate that human chunking process. This approach is particularly effective for complex and dynamic documents, where a nuanced understanding of context is beneficial. It can yield a more human-like organization of text and improve the effectiveness of downstream processing, at the cost of additional LLM calls during chunking.
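A minimal sketch of the idea: number the sentences, ask an LLM which ones begin a new topic, and group sentences at those boundaries. The `llm` argument is a placeholder for any function that sends a prompt to a model and returns its text reply; the prompt wording and the expected reply format (comma-separated sentence numbers) are assumptions, and out-of-range numbers in the reply are ignored.

```python
import re
from typing import Callable

def agentic_chunks(sentences: list[str], llm: Callable[[str], str]) -> list[str]:
    """Let an LLM choose topic boundaries, then group sentences accordingly.

    `llm` is a placeholder: any callable mapping a prompt string to the model's reply.
    """
    if not sentences:
        return []
    numbered = "\n".join(f"{i}: {s}" for i, s in enumerate(sentences))
    prompt = (
        "Below are numbered sentences from one document. Reply with the numbers "
        "of the sentences that should START a new chunk because they begin a new "
        "topic, as a comma-separated list.\n\n" + numbered
    )
    reply = llm(prompt)
    # Keep only valid indices; sentence 0 always starts the first chunk.
    starts = sorted({int(n) for n in re.findall(r"\d+", reply)
                     if 0 < int(n) < len(sentences)} | {0})
    bounds = starts + [len(sentences)]
    return [" ".join(sentences[a:b]) for a, b in zip(bounds, bounds[1:])]
```

For testing without a model, `llm` can be a stub such as `lambda prompt: "2"`, which splits a four-sentence list into two chunks of two sentences each.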